Algorithm Control – The Endgame

by Michael Hollister

Published exclusively at Michael Hollister on February 18, 2026

2,718 words · 14 minutes reading time

This analysis is made available for free – but high-quality research takes time, money, energy, and focus. If you’d like to support this work, you can do so here:

Alternatively, support my work with a Substack subscription – from as little as 5 USD/month or 40 USD/year!

Let’s build a counter-public together.

Total Information Control – What Comes If Nobody Stops It

“Control of Recommender Systems”

The Admission. The Invisible Censorship. The Perfect Authoritarian System. And Why Resistance Is Still Possible.

“We Have Control” – The Mask Falls

March 8, 2024. Brussels.

A public event: “Protecting The 2024 Elections: From Alarm to Action.”

Organized by the EU Commission. Present: Representatives from Meta, Google, TikTok, X. Plus EU officials. Plus “experts” from think tanks.

On stage: Renate Nikolay, Deputy Director-General at DG-Connect—one of the most powerful technocrats in Brussels.

Nikolay speaks about the Digital Services Act. About “systemic risks.” About the “successes” of recent years.

Then she says something that is normally never said publicly.

A sentence documented in an internal Google memo (submitted to the US House of Representatives):

“We have control of recommender systems… [but that’s] not enough… we need to go further.”

Read that again.

“We have control of recommender systems.”

Not “We regulate.” Not “We monitor.” “We have control.”

Control.

She said it. Publicly. Before witnesses. And nobody objected.

Because it’s the truth.

The EU Commission controls what 450 million Europeans see on social media.

Not directly. Not obviously. But structurally, systematically, totally.

How?

Through control of algorithms—the invisible mechanisms that determine what appears in your newsfeed, what is recommended to you, what you see.

This is the endgame.

Not burning books. Not arresting dissidents. Not closing newspapers.

But: Making information invisible.

It still exists. Technically. But nobody sees it. Because the algorithm hides it.

This is the perfect censorship of the 21st century.

And you’ve seen in the last seven parts of this series how it was built:

- Part 1: The System – DSA, Codes, NGOs, Evidence

- Part 2: The Crimes – Romania, COVID, eight elections

- Part 3: Democracy Shield – User Verification from 2027

- Part 4: TikTok/Meta Policies – concrete censorship rules

- Part 5: Breton vs. Musk – the failed intimidation attempt

- Part 6: Stanford – the transatlantic alliance

- Part 7: Germany – €1.5 bn, NetzDG, Correctiv

Now, Part 8: How everything comes together. What the endgame is. And whether resistance is still possible.

How Algorithms Shape Opinions

What is a Recommender System?

A recommender system (recommendation algorithm) is the code that decides:

- What appears in your Facebook newsfeed?

- Which videos does YouTube suggest?

- Which tweets does X show first?

- What lands on your TikTok For You Page?

You think you decide what you see?

Wrong.

The algorithm decides.

Example:

You follow 200 friends on Facebook. But Facebook doesn’t show you all posts chronologically. Instead:

The algorithm calculates a score for each post:

- How “relevant” is the post for you? (based on your previous behavior)

- How “popular” is the post? (likes, comments, shares)

- How “safe” is the post? (Safety Score – is the content “problematic”?)

Only posts with a high score appear in your feed.

The rest? Invisible.

You never see it. You don’t know it exists.
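The scoring logic described above can be sketched in a few lines. This is a minimal illustration, not any platform's actual ranking code: the `Post` fields, the multiplicative formula in `feed_score`, and the `threshold` of 0.2 are all assumptions made for the example.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class Post:
    post_id: str
    relevance: float   # hypothetical 0..1 signal: fit with the user's past behavior
    popularity: float  # hypothetical 0..1 signal: normalized likes, comments, shares
    safety: float      # hypothetical 0..1 signal: 1.0 = unflagged, near 0.0 = "problematic"


def feed_score(post: Post) -> float:
    """Combine the three signals into one score (assumed multiplicative formula)."""
    return post.relevance * post.popularity * post.safety


def build_feed(posts: list[Post], threshold: float = 0.2) -> list[Post]:
    """Keep only posts at or above the threshold, highest score first."""
    visible = [p for p in posts if feed_score(p) >= threshold]
    return sorted(visible, key=feed_score, reverse=True)


posts = [
    Post("holiday-photos", relevance=0.9, popularity=0.8, safety=1.0),
    Post("local-news", relevance=0.6, popularity=0.5, safety=1.0),
    Post("flagged-opinion", relevance=0.9, popularity=0.8, safety=0.1),
]

feed = build_feed(posts)
print([p.post_id for p in feed])  # ['holiday-photos', 'local-news']
```

In this toy example the third post is just as relevant and popular as the first. Only its low safety value keeps it out of the feed.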

How the Safety Score Gets Manipulated

Here it gets criminal.

The Safety Score is calculated by AI models trained to recognize “problematic content.”

Who defines “problematic”?

The EU Commission. Via the DSA. Via “Codes of Practice.” Via “Trusted Flaggers.”

What is marked as “problematic”?

We saw it in Part 4:

- “Marginalizing speech” (e.g., “There are only two genders”)

- “Undermines trust in institutions” (e.g., “The EU Commission is not democratically elected”)

- “Misinformation” (e.g., “COVID vaccines have side effects”)

- “Hate speech” (e.g., “Aggressive criticism of politicians”)

When a post is marked as “problematic”:

- Its Safety Score drops drastically

- Its overall score drops

- It appears in almost nobody’s feed

The post still exists. Technically. On the server. You can find it if you go directly to the poster’s profile.

But nobody sees it by chance. It’s not recommended. It doesn’t appear in search results. It disappears.

This is “demotion.”

Invisible censorship.
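Demotion can be illustrated with a small sketch. It assumes, purely for illustration, that a flagged post's score is multiplied by a fixed `demotion_factor`. The function name, the factor of 0.1, and the example scores are hypothetical and not taken from any platform documentation.

```python
from __future__ import annotations


def rank_feed(
    scores: dict[str, float],
    flagged: set[str],
    demotion_factor: float = 0.1,
) -> list[str]:
    """Rank post ids by score, highest first.

    Flagged posts are not deleted. Their score is multiplied by
    demotion_factor (an assumed value), which pushes them down the ranking.
    """
    adjusted = {
        post_id: score * (demotion_factor if post_id in flagged else 1.0)
        for post_id, score in scores.items()
    }
    return sorted(adjusted, key=adjusted.get, reverse=True)


scores = {"a": 0.9, "b": 0.6, "c": 0.4}

before = rank_feed(scores, flagged=set())
after = rank_feed(scores, flagged={"a"})

print(before)  # ['a', 'b', 'c']
print(after)   # ['b', 'c', 'a']
```

Post "a" is still in the list after flagging, so it still exists. It has simply moved from first place to last.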

The Bubble Control

But it gets even more insidious.

The algorithm doesn’t just shape what you see. It shapes whom you see.

Example:

You’re conservative. You like posts about migration, sovereignty, traditional values.

The algorithm learns: “This user is conservative.”

What happens?

- Conservative posts from friends are prioritized in your feed

- Left/progressive posts from friends are deprioritized

You think your friends only post conservative things.

In reality, they post other things too—but the algorithm doesn’t show them to you.

The result:

You live in an algorithmic bubble.

Not because you chose friends who all think the same.

But because the algorithm only shows you what confirms your worldview.

But—and here it gets dystopian:

The EU Commission can manipulate this bubble at will.

Example (hypothetical, but technically possible):

Two weeks before an election:

The EU Commission instructs platforms: “Prioritize pro-EU content. Deprioritize EU-skeptical content.”

Platforms adjust the algorithms.

What happens?

- Users see more pro-EU posts (even from friends who don’t normally post like that)

- Users see fewer EU-critical posts (even from friends who normally do)

Users notice nothing.

They think: “Interesting, my friends are all suddenly becoming more pro-European.”

In reality: The algorithm was changed.

This is opinion manipulation on an industrial scale.

And Nikolay said: “We have control.”

She wasn’t lying.

How Far Does the Control Go?

Internal documents (submitted to the US House of Representatives) show:

The EU Commission has regular meetings with platform algorithm teams:

Agenda items (from meeting minutes, 2023-2024):

- “How can algorithms prioritize ‘positive narratives’?”

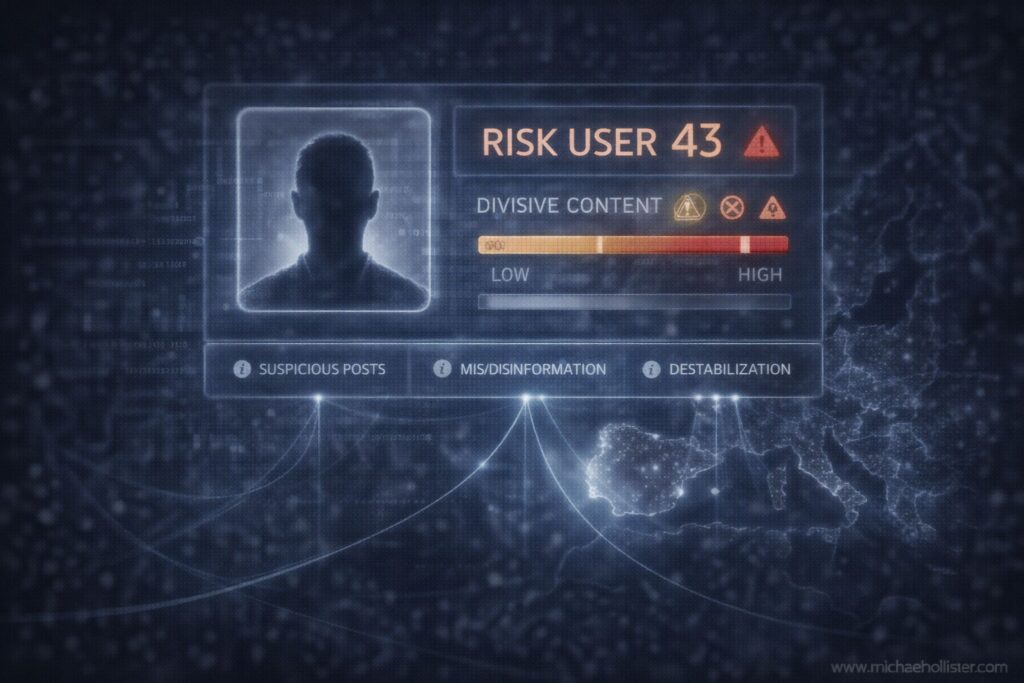

- “How can we identify and throttle ‘divisive content’?”

- “What metrics do platforms use for ‘civic discourse quality’?”

“Positive narratives” = pro-EU, pro-government, pro-establishment.

“Divisive content” = anything that polarizes (often: conservative opinions about migration, gender, EU).

The EU Commission gives platforms recommendations.

And platforms comply.

Why?

Because the alternative is €7 billion in fines.

Total Information Control – What’s Coming

Now combine everything you’ve learned in this series:

1. User Verification (from 2027): Every user is identified. No more anonymous posts.

2. Algorithm Control (now): The EU controls what people see.

3. Trusted Flaggers (270+ NGOs from 2027): Hundreds of organizations with direct censorship authority.

4. Unified Hate Speech Definition (from 2027): “Aggressive criticism of politicians” is hate speech.

5. Pre-Bunking (from 2026): Proactive propaganda against “problematic narratives” before they spread.

What does this mean together?

Scenario: Germany, Federal Election 2029

January 2029:

AfD is polling at 35%. CDU at 25%. Greens at 12%. SPD at 10%.

AfD could become the strongest party.

The establishment is in panic.

What happens:

Phase 1 (February 2029): Pre-Bunking

The European Centre for Democratic Resilience (see Part 3) launches a €50 million campaign:

- Sponsored posts on Facebook, Instagram, YouTube, TikTok: “Facts about extremism”

- Influencer partnerships: “Why I would never vote AfD”

- “Media literacy” videos: “How to recognize right-wing disinformation”

The campaign runs everywhere. Every day. Millions of impressions.

Phase 2 (March 2029): Algorithm Manipulation

Platforms adjust their algorithms:

- AfD posts are throttled by 60%

- Pro-AfD comments are algorithmically hidden

- Anti-AfD posts are prioritized

Users notice nothing. They just think: “Strange, AfD is suddenly less present.”

Phase 3 (April 2029): Trusted Flagger Offensive

270 EU-funded fact-checkers mark thousands of AfD posts as:

- “Hate speech” (for “aggressive criticism of government”)

- “Misinformation” (for “misleading statements about EU policy”)

- “Divisive content” (for “polarizing rhetoric”)

Posts are deleted or labeled with warnings. Reach collapses.

Phase 4 (May 2029): User Verification + Social Profiling

Every post is assigned to a verified user.

Platforms create profiles:

- User A: frequently posts AfD content → “High Risk User”

- User B: frequently likes AfD posts → “Medium Risk User”

“High Risk Users” get:

- All posts automatically throttled (even apolitical ones)

- Shadowban-like treatment

- Possibly account suspension for “repeated violations”

Phase 5 (June-September 2029): Total Information Control

The average voter sees:

- Many anti-AfD posts

- Few pro-AfD posts

- Many pro-establishment posts

He thinks: “AfD is losing support. The polls must be wrong.”

In reality: The algorithms were manipulated.

Election Day (September 2029):

AfD gets 28%. Instead of 35%.

CDU/SPD/Greens form a coalition.

Mission accomplished.

And nobody can prove the election was manipulated.

Because the manipulation was algorithmic. Invisible. Deniable.

Is This Science Fiction?

No.

This is happening now. We documented it in Part 2: Eight European elections, 2023-2024, same pattern.

2029 will just be more perfect.

Because by then:

- User Verification is fully implemented

- Democracy Shield has been running for three years

- Algorithm control has become “normal”

Social Credit for Europe – Not Official Yet, But…

China has a Social Credit System: Citizens get points. Good behavior = points rise. Bad behavior = points fall.

Low points = no loans, no plane tickets, no good jobs.

Europe officially has no Social Credit System.

But the infrastructure already exists:

- User Verification → Every post is identifiable

- Algorithm Profiling → Platforms know who posts “problematic” content

- Trusted Flagger Reports → NGOs report “problematic users”

- DSA Penalties → Platforms must punish “repeat offenders” more severely

Combined:

A user who regularly makes “problematic” posts gets:

- All posts throttled

- Account warnings

- Temporary bans

- Permanent suspension

This is functionally a Social Credit System.

It’s just not called that.

Pre-Crime Content Moderation – The AI Predicts

Platforms are already developing AI models that predict whether a post might become “problematic” before it spreads.

How it works:

- AI analyzes a new post

- AI calculates: “Probability this post goes viral AND becomes controversial”

- If probability high: Immediately throttle (before even one person sees it)

This means:

Censorship before distribution.

Not reacting to “misinformation.” But preemptively suppressing what could potentially become problematic.

This is pre-crime.

Minority Report for social media.
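The decision rule described under “How it works” can be sketched as follows. This is a simplified illustration under stated assumptions: the two probabilities are treated as independent so that their product stands in for “viral AND controversial”, and the `threshold` of 0.5 is an arbitrary example value. The function name is hypothetical.

```python
def should_throttle(
    p_viral: float,
    p_controversial: float,
    threshold: float = 0.5,
) -> bool:
    """Decide, before the post has been shown to anyone, whether to throttle it.

    Assumption: the two model outputs are independent, so the joint
    probability is approximated by their product.
    """
    joint_probability = p_viral * p_controversial
    return joint_probability > threshold


# Likely viral and likely controversial: throttled before anyone sees it.
print(should_throttle(p_viral=0.9, p_controversial=0.8))  # True

# Likely viral but unlikely to be controversial: distributed normally.
print(should_throttle(p_viral=0.9, p_controversial=0.3))  # False
```

The point of the sketch is the timing: the check runs on predictions alone, with no impressions, reports, or user reactions as input.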

Is Resistance Possible?

The honest answer: Yes. But difficult.

Technically: Yes

Tools exist:

- VPNs – hide your location (but platforms increasingly block VPNs)

- Tor Browser – anonymous browsing (slow, cumbersome)

- Decentralized platforms – Mastodon, Nostr, Matrix (small user base, but functional)

- Encrypted messaging – Signal, Matrix (for private communication)

Problem:

99% of people stay on Facebook, Instagram, YouTube, TikTok.

Because their friends are there. Because it’s convenient. Because decentralized platforms are complicated.

Technical resistance only works for a small elite.

Practically: Difficult

The reality:

Most people:

- Don’t know how VPNs work

- Don’t have time to deal with Tor

- Don’t want to switch to decentralized platforms (because nobody’s there)

The system wins through convenience.

People choose comfort over freedom. Always.

Politically: Still Possible – But the Window Is Closing

What’s still possible:

1. Elections

Vote for opposition parties that want to abolish the DSA, stop Democracy Shield, and end NGO financing.

Problem: These parties are systematically suppressed (see Part 2).

2. Lawsuits

Constitutional complaints in Germany. Lawsuits before the ECHR (European Court of Human Rights).

Problem: Courts are slow. Rulings come years later. By then the system is fully implemented.

3. Mass Protests

Millions in the streets. “We want freedom of speech back.”

Problem: Mass protests are framed as “right-wing extremist” (see Correctiv, Part 7). The media reports negatively. Public opinion is manipulated.

4. Technological Exodus

Mass switch to decentralized platforms. Make EU systems irrelevant.

Problem: Needs critical mass. As long as only 1% switch, nothing changes.

The window is closing:

January 1, 2027: User Verification becomes mandatory

After that: Anonymous resistance is impossible

We have less than a year.

What Individuals Can Do

Even though the system seems overwhelming:

1. Inform

Share this series. Explain to friends, family, colleagues what’s coming.

Most people don’t know.

2. Document

When your posts are deleted, censored, throttled: Screenshot. Archive. Publish.

Make visible what is made invisible.

3. Use Decentralized Platforms

Even if small: Mastodon, Nostr, Matrix. The more who switch, the bigger they become.

4. Use a VPN

Not perfect, but better than nothing.

5. Donate

To organizations fighting for freedom of speech. To alternative media. To lawyers challenging the DSA in court.

6. Vote

For parties that reject this system. Even if the election is manipulated—every vote counts.

7. Don’t Stay Silent

The most important thing.

Silence is consent.

Say what you think. Even if it’s “problematic.” Even if you’re throttled.

Because censorship only works when people censor themselves.

Closing Words – The Truth Remains

We’ve reached the end of this series.

Eight articles. 20,000+ words. The most comprehensive documentation of the EU censorship system ever written in German.

What you’ve learned:

- The system: DSA, Codes, NGOs, €3-5 billion, Stanford, Germany

- The crimes: Romania, COVID, eight elections, true information censored

- The endgame: User Verification, algorithm control, total information control

- Those responsible: Von der Leyen, Jourova, Breton, DiResta, Kahane, Correctiv

What happens now depends on you.

You can read this series and forget.

Or you can act.

But know this:

Systems fall. Always.

The Soviet Union fell. The Third Reich fell. Every authoritarian system in history fell.

Why?

Because truth is stronger than lies. Long-term.

Short-term, censorship can win. Propaganda can work. People can stay silent.

But truth remains.

These articles remain. On servers. In archives. In the minds of those who read them.

And when the system falls one day—and it will fall—there will be documentation.

Proof that there was resistance. That not everyone stayed silent.

That is the value of this series.

Not that it changes the world. Maybe it doesn’t.

But that it bears witness.

For those who come after us.

Michael’s Story

The author of this series — Michael — is a German in exile.

He served six years in the Bundeswehr. SFOR/KFOR, Balkan peacekeeping. Under fire. In combat zones.

He fought for Germany. For the values they said they would defend.

Years later, he left the country. Because he saw how these values were betrayed.

Now he lives in South America. Builds a farm. Writes investigative journalism.

And still fights.

Not with weapons. With words. “The pen is mightier than the sword.”

This is resistance.

Not spectacular. Not heroic in the Hollywood sense.

But: Persistent. Honest. Inconvenient.

He didn’t stay silent.

And you?

End of Series

This eight-part investigative series documents the EU censorship system based on the US House of Representatives report “The Foreign Censorship Threat, Part II” (February 3, 2026).

All claims are supported by internal documents, sworn testimony, or publicly accessible sources.

Parts 1-8 are available at www.michael-hollister.com

Share this series.

Spread the information.

For inquiries about republishing this series on your website, please contact the author.


Michael Hollister is a geopolitical analyst and investigative journalist. He served six years in the German military, including peacekeeping deployments in the Balkans (SFOR, KFOR), followed by 14 years in IT security management. His analysis draws on primary sources to examine European militarization, Western intervention policy, and shifting power dynamics across Asia. A particular focus of his work lies in Southeast Asia, where he investigates strategic dependencies, spheres of influence, and security architectures. Hollister combines an operational insider's perspective with uncompromising systemic critique, beyond opinion journalism. His work appears on his bilingual website (German/English) www.michael-hollister.com, on Substack at https://michaelhollister.substack.com, and in investigative outlets across the German-speaking world and the Anglosphere.

SOURCES

Publicly Available:

- U.S. House Committee Report

- DSA Text

- Democracy Shield Proposal (Nov 12, 2025)

- German Federal Budget 2024

- NetzDG

- Correctiv Reports

- Amadeu Antonio Foundation

- Stanford Internet Observatory Publications

Not Publicly Available (cited via US House Report):

- Internal Platform Documents (subpoenaed): Not publicly available, cited throughout US House Report (key pages for Part 8: pp. 34-35, 70-76, 124-134)

- EU Commission Meeting Minutes (leaked): Not publicly available, cited throughout US House Report (key pages for Part 8: pp. 29-37, 60-70, 96-123)

- Renate Nikolay Statement (March 8, 2024): Not publicly available, documented in internal Google memo cited in US House Report pages 34, 133

© Michael Hollister — All rights reserved. Redistribution, publication or reuse of this text requires express written permission from the author. For licensing inquiries, please contact the author via www.michael-hollister.com.
